The Race to Build the World’s Greatest Supercomputer

Part of the Dutch supercomputer ‘Cartesius’ (Photo: Dennis van Zuijlekom/Flickr)

For the past two years, since June 2013, the top supercomputer in the world has been Tianhe-2. (Its name translates to Sky River, the Milky Way.) Tianhe-2 lives in Guangzhou, China, and on a benchmark test, it reached 33.86 petaflop/s. A petaflop/s is a measure of how fast a computer can perform: one petaflop/s is one thousand trillion floating-point operations every second.

But Tianhe-2 may not stay at the top for long. This spring, the United States’ Department of Energy announced that it was going to spend $200 million to build the fastest supercomputer in the world, by 2018. And when that supercomputer, Aurora, first starts up, there’s no guarantee that it’ll be on top for long, either.

All around the world, countries are competing to create the world’s most powerful supercomputer—and to be the first to break into the next order of magnitude of performance, the exascale.

Tianhe-2 supercomputer in Guangzhou, China (Photo: Sam Churchill/Flickr)

“It’s a race, analogous to the space race,” says Horst Simon, Deputy Laboratory Director of the Lawrence Berkeley National Laboratory and a cofounder of the TOP500 project, which regularly ranks the world’s supercomputers. As with the race to space, there are many, parallel reasons for the world’s governments to want to produce the best technology in this arena. “One is national prestige. There’s scientific discovery, but also national security. And there are the economic spin-off effects,” says Simon. “It’s a very competitive activity.”

For governments, scientists, mathematicians and engineers, there’s a long roster of reasons to want access to one of the world’s most powerful computers. These computers are necessary to model giant systems, like the global climate, and tiny ones, like nanoscale materials and cell-level biology. They’re used to understand how disease and supernovae work, and to imagine how the earliest formation of the universe might have happened. But these computers have also led to technologies that trickle down to the rest of us: supercomputers have brought us highly accurate weather forecasts, the programs that keep airplane seats full of travelers, and cars that behave better in crash tests. They’ve even been used to design consumer products from Pringles (modifying the chips’ shape just enough to keep them from flying off the production line) to Pampers (by modeling the multi-phase turbulence and material accumulation of absorbent diapers).

The MareNostrum supercomputer, which is housed in the Barcelona Supercomputing Center - National Supercomputing Center (BSC-CNS), Spain. (Photo: IBM Research/Flickr)

What actually makes a supercomputer super? It’s always a bit relative: they must be more powerful than other computers at the time. Right now, they’re generally created by running millions of processors in parallel. Cloud computing doesn’t count, though: The physical components of a supercomputer must all be located in, essentially, the same room.

There’s a real reason for that. Even though computers can coordinate with each other from across the world, it can take seconds for the data to travel from one place to another. The world’s fastest computers, though, work on a nanosecond level. “If you wait for data for two to three seconds,” Simon says, “it’s a billion times slower than what you do at the microsecond level. That ends the advantage of a big, spread out system.”
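To put rough numbers on that gap, here is a minimal sketch in Python. It assumes a two-second wait for remote data (the low end of Simon's range) and compares it with local steps of one nanosecond and one microsecond. The step times are round figures chosen for illustration, not measurements of any real machine, and the names are made up for this example.

```python
# Illustrative figures only: the 2-second delay is the low end of the range
# in the quote, and the step times are round numbers assumed for this sketch.
NETWORK_DELAY_S = 2.0   # assumed wait for data from a distant machine
NANOSECOND_S = 1e-9     # assumed time for one nanosecond-scale local step
MICROSECOND_S = 1e-6    # assumed time for one microsecond-scale local step


def slowdown(delay_s: float, step_s: float) -> float:
    """Return how many local steps fit into one wait for remote data."""
    return delay_s / step_s


vs_nanosecond = slowdown(NETWORK_DELAY_S, NANOSECOND_S)
vs_microsecond = slowdown(NETWORK_DELAY_S, MICROSECOND_S)

print(f"{vs_nanosecond:.0e} nanosecond-scale steps per wait")
print(f"{vs_microsecond:.0e} microsecond-scale steps per wait")
```

Under these assumptions, one two-second wait costs about two billion nanosecond-scale steps, or about two million microsecond-scale steps. The "billion times" figure lines up with work done at the nanosecond level.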

Simon and his colleagues first started ranking the world’s most powerful computers in the early 1990s. Japanese and American computers generally vied for the top spot; China first took it in 2010. (The list usually ranks systems created by governments and other public institutions. Private companies such as Google might have more powerful systems but don’t like to say so or have them tested publicly.) Even so, America still has the most systems on the list, overall, with 231, almost half of the world’s most powerful computers, although that number has been slipping, too.

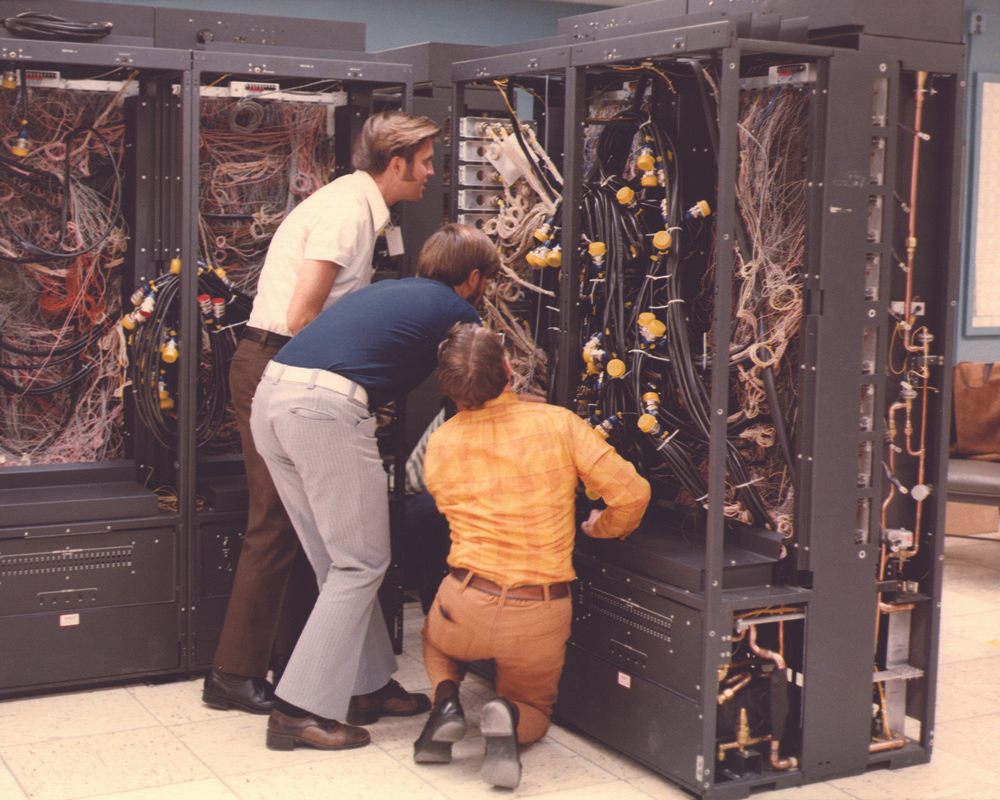

An early supercomputer installed at Lawrence Livermore National Laboratory, c. 1970 (Photo: Public Domain/WikiCommons)

In the past few years, it’s been harder to make big leaps. With enough money, it’s possible to make faster and faster computers using the same technology. But that strategy is reaching its useful limits. As systems built with current technology grow bigger, they use huge amounts of power, so much that they’re prohibitively expensive to run. In 2010, for instance, a U.S. Energy Department advisory committee found that running such a supercomputer at the exaflop level would require “roughly the output of the Hoover Dam,” which has a nameplate capacity of about 2,000 megawatts and produces about 4.2 billion kilowatt-hours each year, enough to power about 350,000 homes. In a more recent report, the advisory committee listed energy efficiency as the top challenge to making an exascale system.
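The Hoover Dam figures mix two different units: megawatts measure power at a given moment, while kilowatt-hours measure energy delivered over time. A short Python sketch, using only the numbers quoted above and assuming a 365-day year, converts the annual energy into an average power output so the two can be compared. The variable names are illustrative.

```python
# Figures as quoted in the article; names are illustrative.
HOURS_PER_YEAR = 365 * 24   # 8,760 hours, ignoring leap years (assumption)
NAMEPLATE_MW = 2000.0       # nameplate capacity, in megawatts
ANNUAL_KWH = 4.2e9          # energy produced per year, in kilowatt-hours
HOMES = 350_000             # homes that energy is said to power

# Average power = energy / time; divide by 1,000 to go from kW to MW.
average_mw = ANNUAL_KWH / HOURS_PER_YEAR / 1000.0

# Share of the nameplate capacity actually delivered on average.
capacity_factor = average_mw / NAMEPLATE_MW

# Implied yearly consumption per home.
kwh_per_home = ANNUAL_KWH / HOMES

print(f"Average output: {average_mw:.0f} MW")
print(f"Share of nameplate capacity: {capacity_factor:.0%}")
print(f"Implied use per home: {kwh_per_home:.0f} kWh per year")
```

By this arithmetic the dam averages a little under 480 megawatts, roughly a quarter of its nameplate capacity, and the quoted figures imply about 12,000 kilowatt-hours per home each year.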

Right now, Tianhe-2 uses 17.8 MW to reach speeds of 33.86 petaflop/s. While the U.S. government wants to beat that speed, creating an exaflop computer by 2023, the aim is to do it using much, much less power per operation, within a total of 20 megawatts. That means an exascale system will have to be roughly 26 times as efficient as Tianhe-2, more than an order of magnitude beyond current technology.
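The size of that efficiency gap can be checked with a few lines of Python. This sketch uses only the figures above (33.86 petaflop/s at 17.8 MW for Tianhe-2, and one exaflop/s within 20 MW for the target) and assumes the full 20 MW budget is spent. The function and variable names are illustrative.

```python
# Figures as quoted in the article; names are illustrative.
TIANHE2_FLOPS = 33.86e15   # 33.86 petaflop/s
TIANHE2_WATTS = 17.8e6     # 17.8 megawatts
EXA_FLOPS = 1e18           # 1 exaflop/s
EXA_WATTS = 20e6           # 20 megawatt budget, assumed fully used


def flops_per_watt(flops: float, watts: float) -> float:
    """Return energy efficiency in floating-point operations per second per watt."""
    return flops / watts


tianhe2_eff = flops_per_watt(TIANHE2_FLOPS, TIANHE2_WATTS)
exa_eff = flops_per_watt(EXA_FLOPS, EXA_WATTS)
improvement = exa_eff / tianhe2_eff

print(f"Tianhe-2: {tianhe2_eff / 1e9:.2f} gigaflop/s per watt")
print(f"Exascale target: {exa_eff / 1e9:.2f} gigaflop/s per watt")
print(f"Required improvement: about {improvement:.0f}x")
```

Tianhe-2 works out to about 1.9 gigaflop/s per watt, while the target needs 50, a jump of about 26 times. Speed has to rise nearly thirtyfold while the power budget barely grows.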

One way to make that happen is to decrease the physical distance between the system’s components even further, by, for example, stacking the chips into small piles. “Every nanometer counts,” says Simon. Another strategy would be to replace power-hungry copper wiring with much more efficient optical interconnects, which would save loads of power but mean fundamentally altering how the computer industry builds its products.

That’s one reason why it actually does matter who has the world’s most powerful supercomputer: building these systems means pioneering new technology that will eventually trickle down to the devices that the rest of us use. But also, on a simpler level, it’s just satisfying to have bragging rights: We have the world’s best computer, and you don’t.

On Obscura Day, visit Blue Waters, which, according to the University of Illinois, where it lives, is the fastest supercomputer on a university campus.
